An efficient approach for textual data classification using deep learning

Mohamad's interest is in Programming (Mobile, Web, Database and Machine Learning). He is studying at the Center For Artificial Intelligence Technology (CAIT), Universiti Kebangsaan Malaysia (UKM).

Abstract:

Text categorization is an effective activity that can be accomplished using a variety of classification algorithms. In machine learning, the classifier is built by learning the features of categories from a set of preset training data. Similarly, deep learning offers enormous benefits for text classification since they execute highly accurately with lower-level engineering and processing. This paper employs machine and deep learning techniques to classify textual data. Textual data contains much useless information that must be pre-processed. We clean the data, impute missing values, and eliminate the repeated columns. Next, we employ machine learning algorithms: logistic regression, random forest, K-nearest neighbors (KNN), and deep learning algorithms: long short-term memory (LSTM), artificial neural network (ANN), and gated recurrent unit (GRU) for classification. Results reveal that LSTM achieves 92% accuracy outperforming all other model and baseline studies.

(Abdullah Alqahtani, H. Khan, Shtwai Alsubai, Mohemmed Sha, Ahmad S. Almadhor, Tayyab Iqbal, Sidra Abbas)

Discussion:

The paper "An efficient approach for textual data classification using deep learning" brings attention to the potential of machine and deep learning models, such as LSTM and GRU, for text classification tasks. Interestingly, the authors use the Titanic dataset for their experiments, which primarily contains structured data and limited text fields. This choice raises intriguing questions about how text-focused models can be adapted for datasets that are not traditionally text-heavy. Could this approach point to new ways of extracting or representing textual features from structured data? Or does it highlight the importance of selecting datasets that align more closely with a study's goals? This opens up a larger conversation about balancing creativity in research with ensuring methodological alignment, inviting us to reflect on how we choose and use datasets in machine learning studies.

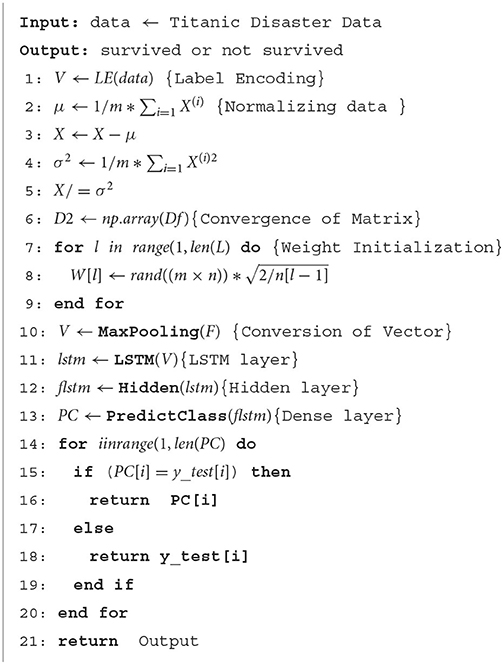

Lab Works:

1. V ← LE(data) {Label Encoding}

2. μ ← (1/m) * Σ(i=1 to m) X^(i) {Normalizing data}

3. X ← X - μ

4. σ² ← (1/m) * Σ(i=1 to m) (X^(i))²

5. X ← X / σ²

6. D2 ← np.array(Df) {Convergence of Matrix}

7. for l in range(1, len(L)) {Weight Initialization}

1. W[l] ← rand((m × n)) * √(2 / n[l-1])

8. end for

9. V ← MaxPooling(F) {Conversion of Vector}

10. lstm ← LSTM(V) {LSTM layer}

11. f_lstm ← Hidden(lstm) {Hidden layer}

12. PC ← PredictClass(f_lstm) {Dense layer}

13. for i in range(1, len(PC)) do

1. if PC[i] == y_test[i] then

1. return PC[i]

2. else

1. return y_test[i]

3. end if

14. end for

15. return Output

https://colab.research.google.com/drive/1NLegXg7WTNgajyU1XgX1g-Qpe9r6Fwqp